Fair Face Recognition (ECCV'20)

Dataset description

Quick Overview

The task: The participants will be asked to develop their fair face verification method aiming for a reduced bias in terms of gender and skin color (protected attributes). The method developed by the participants will need to output a list of confidence scores given test ID pairs to be verified (either positive or negative matches). Both the bias and accuracy of the method will be evaluated using the provided face dataset (plus individual protected and legitimate attributes and other metadata, as well as identity ground truth annotations).

The dataset: We present a new collected dataset with 13k images from 3k new subjects along with a reannotated version of IJB-C [1] (140k images from 3.5k subjects), totalling ~153k facial images from ~6.1k unique identities. Both databases have been accurately annotated for gender and skin colour (protected attributes) as well as for age group, eyeglasses, head pose, image source and face size.

[1] IARPA Janus Benchmark - C: Face Dataset and Protocol. Brianna Maze etal.; International Conference on Biometrics (ICB), pp. 158-165, 2018.

Data split, positive/negative pairs

In the Table below, we show some basic information about the dataset used in the challenge. Note, positive/negative pairs are defined for validation and test sets, used in development and test phases, respectively. However, participants are free to create their own pairs to train their models, given the provided ground truth files and metadata.

| Train | Validation* | Test | Total | |

| Images | 100,186 | 17,138 | 35,593 | 152,917 |

| Unique identities | 4,297 | 614 | 1,228 | 6,139 |

| Positive pairs | - | 448,119 | 500,176 | - |

| Negative pairs | - | 552,672 | 500,963 | - |

* the list of validation pairs is provided within "validation_data" provided next, at val_data_e56ouf\val\evaluation_pairs\predictions.csv

Download links

You can download the dataset used in the challenge from the "Data" link on the left side of this webpage. By downloading the data, you agree with the Terms and Conditions of the Challenge. All files are encrypted! To discompress the data, use the associated keys provided below.

Encrypted keys to decompress the data are:

Train data: k59N4q5A

Validation data: FRHEsk53

Validation labels: uN9XV9AM

Test data: wqjdRJrC

Test labels: zV7zz9jz

WARNING: as detailed in the Challenge paper (preprint version), few samples have been removed from the test set after the end of the challenge due to wrong annotations. For this reason, the final validation and test sets provided above (on 8th Oct. 2020) are already without the wrong samples.

Data format

The data is organized as a single folder with images and an annotation file ground_truth.csv. Every image contains a loosely cropped face roughly in the center of the image. The annotation file is a comma separated CSV file. The first line is a header and the following lines contain annotation labels for the images (each line corresponds to one image). The format of the columns is as follows:

TEMPLATE_ID: Unique ID of the image. The file name of the corresponding image is [TEMPLATE_ID].jpg

SUBJECT_ID: ID of the subject

AGE: Age group: 0 is 0-34 years, 1 is 35-64 years and 2 is 65+ years

SKIN_COLOUR: 0 bright (Fitzpatrick types I-III), 1 dark (types IV-VI)

GENDER: 0 male, 1 female

HEAD_POSE: 0 frontal (easy), 1 other (difficult)

SOURCE: 0 image, 1 video frame

GLASSES: 0 no glasses, 1 or 2 glasses

BOUNDING_BOX_SIZE: 0 if both dimensions of the face are >224 px (big), 1 otherwise (small)

Statistics

In the following figures we provide basic statistics of the challenge dataset - the distribution of attributes and histograms of number of images per subject and face sizes (here represented as the geometric average of width and height of the original bounding box). Please note that the statistics of the individual splits (training/testing/validation) will be similar but not the same, because the testing and validation splits were generated to maximize their diversity.

Next, we provide example image pairs from the validation subset.

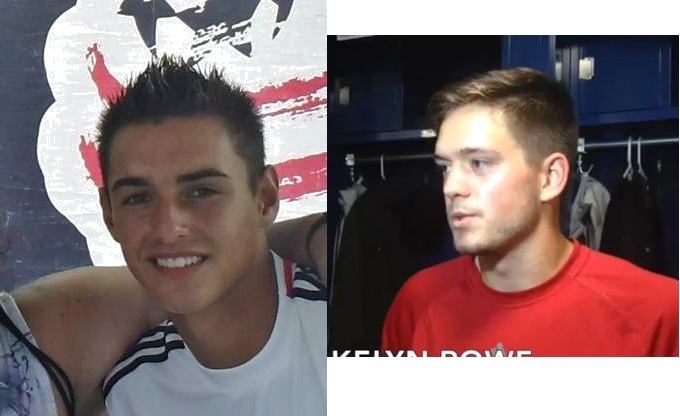

Positive:

|

|

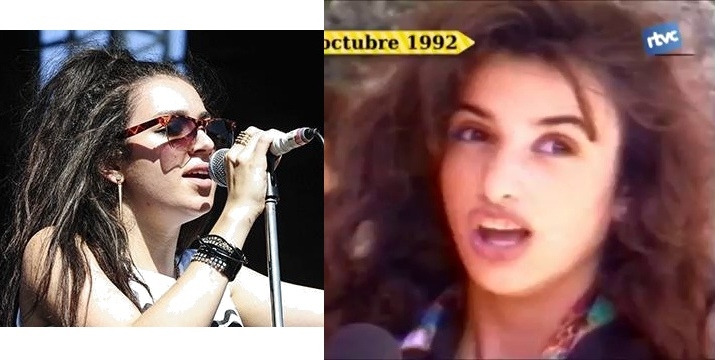

Negative:

|

|

The 2020 Looking at People Fair Face Recognition challenge and workshop ECCV are supported by International Association in Pattern Recognition IAPR Technical Committee 12 (IAPR) and European Laboratory for Learning and Intelligent Systems ELLIS, Human-centric Machine Learning program (ELLIS)

News

There are no news registered in Fair Face Recognition (ECCV'20)