2020 ChaLearn Looking at People workshop ECCV: Fair Face Recognition and Analysis

Workshop description

The list of accepted papers and video presentations can be accessed through the program webpage.

Summary

The accuracy of state-of-the-art face verification systems has surpassed the ability of humans to recognise other humans, and it improves every year [1]. However, the datasets used for training and testing are often imbalanced, and numerous reports show that trained models are significantly less accurate for people whose gender and/or skin tone is underrepresented in the data, which makes those models biased [2,3]. The main objective of this workshop and its associated challenge is to advance fairness, in terms of bias mitigation, in biometric/looking-at-people systems. To that end, we propose an event that compiles the latest efforts in the field and runs a fair face verification challenge with a new large dataset and new fairness-aware evaluation metrics.

Topic and Motivation

This workshop will focus on bias analysis and mitigation methodologies, which will result in fairer face recognition and analysis systems. These advances will have a direct impact on equality of opportunity in society. We plan to provide a comprehensive, up-to-date review of fair face recognition and analysis research. We consider it crucial to bring ideas together, discuss them, and push the field towards fairer systems for the good of society. In addition, we will contribute to research in the field by releasing a large annotated dataset for fair face verification and running an associated challenge. The main topics of interest related to fair face recognition and analysis include (but are not limited to):

- Dealing with unbalanced and/or noisy datasets.

- Novel methodologies, metrics and algorithms for bias mitigation.

- Explainable/interpretable strategies for understanding/dealing with bias.

- Applications of generative models.

Important Dates and Submission instructions

- 07/20/2020 Paper Submission

- 08/14/2020 Decisions to Authors

- 09/04/2020 Camera-Ready

Workshop date: Friday 28 August (afternoon, half day; more details will be added soon on the ECCV webpage)

Detailed information about the submission instructions can be found here

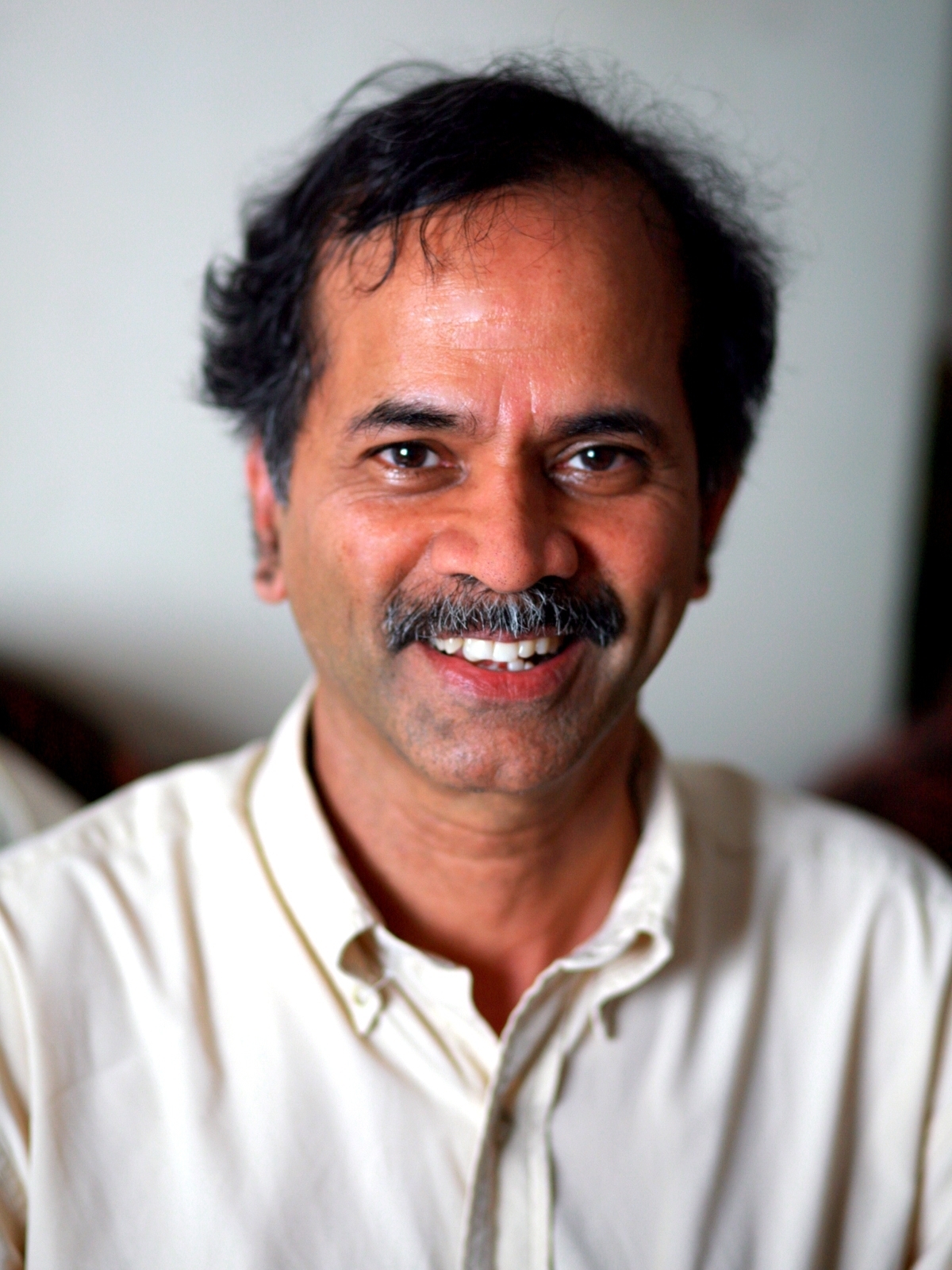

Invited Speakers

| Rama Chellappa | Kate Saenko | Matthew Turk | Walter J. Scheirer |
|---|---|---|---|
| Alice O’Toole | Dimitris Metaxas | Olga Russakovsky | Judy Hoffman |

For detailed information about the invited speakers, click here

[1] Surpassing human-level face verification performance on LFW with GaussianFace. Chaochao Lu, Xiaoou Tang; AAAI’15 Proceedings of the Twenty-Ninth AAAI Conference on Artificial Intelligence, pp. 3811-3819, 2015.

[2] Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification. Joy Buolamwini, Timnit Gebru; Proceedings of the 1st Conference on Fairness, Accountability and Transparency, PMLR 81:77-91, 2018.

[3] Face Recognition Vendor Test (FRVT) Part 3: Demographic Effects. Patrick Grother, Mei Ngan, Kayee Hanaoka. NIST report, 2019.

[4] IARPA Janus Benchmark - C: Face Dataset and Protocol. Brianna Maze et al.; International Conference on Biometrics (ICB), pp. 158-165, 201

The 2020 Looking at People Fair Face Recognition challenge and ECCV workshop are supported by the International Association for Pattern Recognition (IAPR) Technical Committee 12 and the European Laboratory for Learning and Intelligent Systems (ELLIS) Human-centric Machine Learning program.

Check out our associated ECCV'20 ChaLearn LAP Fair Face Recognition Challenge!

Samples from the same subject ID, showing high intra-class variability in terms of resolution, head pose, illumination, background and occlusion.